Annealing SGD

Annealed Stochastic Gradient Descent (AGD)

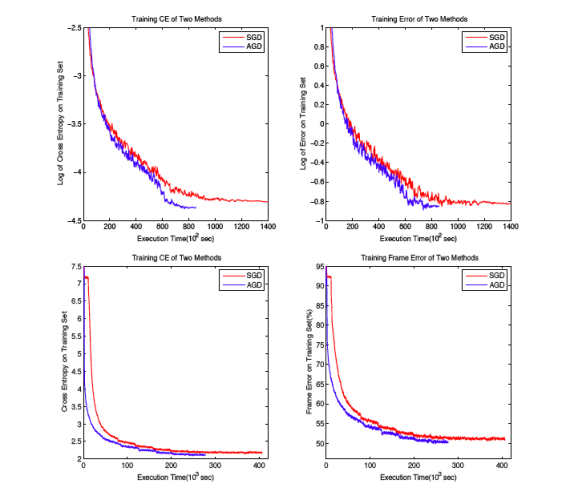

Here, we propose a novel annealed gradient descent (AGD) method for deep learning. AGD optimizes a sequence of gradually improved, smoother mosaic functions that approximate the original non-convex objective function according to an annealing schedule during the optimization process. We present a theoretical analysis of its convergence properties and learning speed. The proposed AGD algorithm is applied to learning deep neural networks (DNNs) for image recognition on MNIST and speech recognition on Switchboard.
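
The general idea of annealing over smoother surrogates can be illustrated with a minimal sketch. It does not reproduce the mosaic-function construction of [1]. As a stand-in, it applies Gaussian smoothing to a hypothetical one-dimensional objective, chosen because its smoothed gradient has a closed form. The names `objective`, `smoothed_grad`, `gradient_descent` and `annealed_descent`, the schedule `sigmas`, the learning rate and the step counts are all assumptions made for illustration.

```python
import math


def objective(x):
    # Hypothetical non-convex toy objective (an assumption, not taken from [1]).
    return x * x + 2.0 * math.sin(5.0 * x)


def smoothed_grad(x, sigma):
    # Gradient of E[objective(x + sigma * z)] with z ~ N(0, 1).
    # Gaussian smoothing damps the sine term by exp(-(5 * sigma)^2 / 2);
    # sigma = 0 recovers the exact gradient of the original objective.
    damping = math.exp(-0.5 * (5.0 * sigma) ** 2)
    return 2.0 * x + 10.0 * math.cos(5.0 * x) * damping


def gradient_descent(x, sigma, steps, lr):
    # Plain gradient descent on the surrogate with smoothing level sigma.
    for _ in range(steps):
        x -= lr * smoothed_grad(x, sigma)
    return x


def annealed_descent(x0, sigmas, steps_per_stage=500, lr=0.01):
    # Optimize a sequence of surrogates from smoothest to sharpest,
    # warm-starting each stage from the previous stage's solution.
    x = x0
    for sigma in sigmas:
        x = gradient_descent(x, sigma, steps_per_stage, lr)
    return x


# Assumed annealing schedule: the last stage uses the original objective.
sigmas = [1.0, 0.5, 0.25, 0.125, 0.0]

# Same starting point and same total number of steps for both runs.
x_plain = gradient_descent(3.0, 0.0, 500 * len(sigmas), 0.01)
x_annealed = annealed_descent(3.0, sigmas)

print("plain   :", x_plain, objective(x_plain))
print("annealed:", x_annealed, objective(x_annealed))
```

In this toy setting, plain descent started at x = 3 settles in a nearby local minimum, while the annealed run first follows the nearly convex surrogate toward the origin and finishes in a lower basin. How the surrogates are built and scheduled in AGD itself is described in [1].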

Reference:

[1] Hengyue Pan, Hui Jiang, “Annealed Gradient Descent for Deep Learning”, Proc. of the 31st Conference on Uncertainty in Artificial Intelligence (UAI 2015), July 2015.
