FSMN

Feedforward Sequential Memory Networks (FSMN)

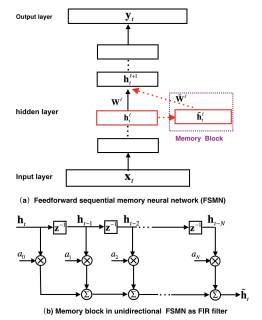

The feedforward sequential memory network (FSMN) is a novel neural network structure that models long-term dependencies in time series without using recurrent feedback. An FSMN is a standard fully-connected feedforward neural network equipped with learnable memory blocks in its hidden layers. The memory blocks use a tapped-delay line structure to encode long context information into a fixed-size representation that serves as a short-term memory mechanism. We have evaluated FSMNs on several standard benchmark tasks, including speech recognition and language modelling. Experimental results show that FSMNs significantly outperform conventional recurrent neural networks (RNNs), including LSTMs, in modelling sequential signals such as speech or language. Moreover, FSMNs can be trained much more reliably and faster than RNNs or LSTMs because the model structure is inherently non-recurrent.
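To make the tapped-delay line idea concrete, here is a minimal sketch of a single memory block in plain Python. It is an illustration under stated assumptions, not the code from this project: it assumes one scalar coefficient per tap, looks only at past frames, and treats frames before the start of the sequence as zeros. The names `memory_block`, `hidden`, and `taps` are hypothetical, and the coefficients are fixed by hand here, whereas in an FSMN they would be learned together with the other network weights.

```python
from typing import List, Sequence


def memory_block(
    hidden: Sequence[Sequence[float]], taps: Sequence[float]
) -> List[List[float]]:
    """Tapped-delay line over hidden activations (an FIR filter along time).

    hidden: T frames, each a list of D hidden-layer activations.
    taps:   N+1 scalar coefficients; taps[i] weights the frame i steps back.
    Returns T memory vectors, each with the same fixed size D.
    """
    dim = len(hidden[0]) if hidden else 0
    out: List[List[float]] = []
    for t in range(len(hidden)):
        mem = [0.0] * dim
        for i, coeff in enumerate(taps):
            if t - i < 0:
                # Assumption: frames before the sequence start count as zeros.
                break
            for d in range(dim):
                mem[d] += coeff * hidden[t - i][d]
        out.append(mem)
    return out


# Three frames of a 2-unit hidden layer, and a 2-tap memory (current + previous).
hidden = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]
taps = [0.5, 0.25]
memory = memory_block(hidden, taps)
print(memory)  # [[0.5, 0.0], [0.25, 0.5], [1.0, 1.25]]
```

Each output vector has the same size as one hidden frame regardless of how many taps are used, which is what "fixed-size representation" refers to. Because every output depends only on a bounded window of inputs and not on a previous output, there is no recurrent feedback and all time steps can be computed independently.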

Project website:

References:

[1] S. Zhang, H. Jiang, S. Wei, L. Dai, “Feedforward Sequential Memory Neural Networks without Recurrent Feedback,” arXiv:1510.02693.

[2] S. Zhang, C. Liu, H. Jiang, S. Wei, L. Dai and Y. Hu, “Feedforward Sequential Memory Networks: A New Structure to Learn Long-term Dependency,” arXiv:1512.08301.