
HOPE

Hybrid Orthogonal Projection & Estimation (HOPE)

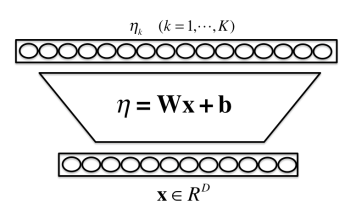

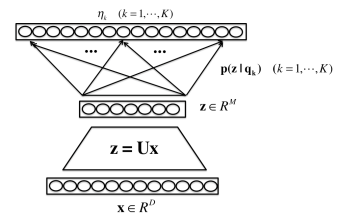

Each hidden layer in a neural network can be formulated as one HOPE model [1].

- The HOPE model combines a linear orthogonal projection and a mixture model under a unified generative modelling framework;

- The HOPE model can be used as a novel tool to probe why and how NNs work;

- The HOPE model provides several new learning algorithms to learn NNs in either supervised or unsupervised ways.
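The two ingredients named above, a linear orthogonal projection followed by a mixture model, can be illustrated with a minimal sketch. This is not the authors' implementation: the function names (`gram_schmidt`, `project`, `mixture_log_likelihood`), the toy numbers, and the choice of an isotropic Gaussian mixture with a shared variance are assumptions made only for illustration, and the mixture family used in [1] may differ.

```python
import math

def gram_schmidt(rows):
    """Orthonormalise a list of row vectors with Gram-Schmidt (hypothetical helper)."""
    basis = []
    for v in rows:
        w = list(v)
        for b in basis:
            coeff = sum(wi * bi for wi, bi in zip(w, b))
            w = [wi - coeff * bi for wi, bi in zip(w, b)]
        norm = math.sqrt(sum(wi * wi for wi in w))
        if norm < 1e-12:
            raise ValueError("rows are linearly dependent")
        basis.append([wi / norm for wi in w])
    return basis

def project(U, x):
    """Linear projection z = U x, where U has orthonormal rows."""
    return [sum(ui * xi for ui, xi in zip(u, x)) for u in U]

def mixture_log_likelihood(z, weights, means, var):
    """Log-density of z under an isotropic Gaussian mixture.

    The Gaussian components are an illustrative assumption, not
    necessarily the mixture family used in the HOPE papers.
    """
    d = len(z)
    log_terms = []
    for w, mu in zip(weights, means):
        sq = sum((zi - mi) ** 2 for zi, mi in zip(z, mu))
        log_terms.append(
            math.log(w) - 0.5 * d * math.log(2 * math.pi * var) - sq / (2 * var)
        )
    m = max(log_terms)  # log-sum-exp for numerical stability
    return m + math.log(sum(math.exp(t - m) for t in log_terms))

# Toy example: project a 3-dimensional input onto a 2-dimensional subspace,
# then score the projected vector under a two-component mixture.
U = gram_schmidt([[1.0, 1.0, 0.0], [0.0, 1.0, 1.0]])
x = [1.0, 2.0, 3.0]
z = project(U, x)
ll = mixture_log_likelihood(
    z, weights=[0.5, 0.5], means=[[0.0, 0.0], [2.0, 2.0]], var=1.0
)
print("projected vector:", z)
print("mixture log-likelihood:", ll)
```

In this sketch the projection matrix is fixed in advance by orthonormalising two hand-picked vectors. In a learning setting the projection and the mixture parameters would instead be estimated from data, which the sketch does not attempt.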

References:

[1] Shiliang Zhang and Hui Jiang, “Hybrid Orthogonal Projection and Estimation (HOPE): A New Framework to Probe and Learn Neural Networks,” arXiv:1502.00702.

[2] Shiliang Zhang, Hui Jiang, and Lirong Dai, “The New HOPE Way to Learn Neural Networks,” Proc. of Deep Learning Workshop at ICML 2015, July 2015.

[3] S. Zhang, H. Jiang, and L. Dai, “Hybrid Orthogonal Projection and Estimation (HOPE): A New Framework to Learn Neural Networks,” Journal of Machine Learning Research (JMLR), 17(37):1–33, 2016.

Software:

The MATLAB code to reproduce the MNIST results in [1, 2] can be downloaded here.


projects/hope/start.txt · Last modified: by hj