FOFE

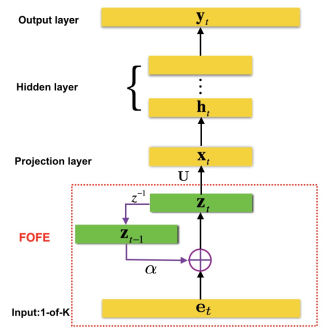

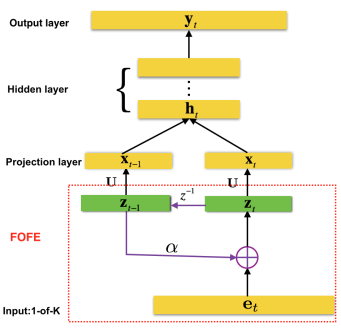

Fixed-size Ordinally-Forgetting Encoding (FOFE)

FOFE is a simple technique to (almost) uniquely map any variable-length sequence into a fixed-size representation, which makes it particularly suitable as input to neural networks. It also has the appealing property that far-away context is gradually forgotten in the representation, which is well suited to modeling natural language.
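To make the encoding concrete, here is a minimal Python sketch. It assumes the usual FOFE recursion z_t = α·z_{t-1} + e_t with z_0 = 0, where e_t is the one-hot vector of the t-th token and 0 < α < 1 is the forgetting factor. The function name `fofe_encode` and the default α = 0.5 are illustrative choices, not taken from the released code. A token that lies k positions before the end of the sequence contributes α^k to its vocabulary slot, which is how distant context fades.

```python
from typing import List, Sequence


def fofe_encode(sequence: Sequence[str], vocab: Sequence[str], alpha: float = 0.5) -> List[float]:
    """Map a variable-length token sequence to a vector of size len(vocab).

    Illustrative sketch of the recursion z_t = alpha * z_{t-1} + e_t, z_0 = 0,
    where e_t is the one-hot vector of the t-th token.
    """
    if not 0.0 < alpha < 1.0:
        raise ValueError("alpha must be in (0, 1)")
    index = {word: i for i, word in enumerate(vocab)}
    z = [0.0] * len(vocab)
    for token in sequence:
        z = [alpha * value for value in z]  # decay everything seen so far
        z[index[token]] += 1.0              # add the one-hot of the current token
    return z


vocab = ["A", "B", "C"]
print(fofe_encode(["A", "B", "C"], vocab))            # [0.25, 0.5, 1.0]
print(fofe_encode(["A", "B", "C", "B", "C"], vocab))  # [0.0625, 0.625, 1.25]
```

Both sequences produce vectors of the same size, three entries here, regardless of their length. Word order is preserved through the powers of α: for "ABCBC" the slot for B holds α + α³ because B occurs at distances 1 and 3 from the end.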

We have applied FOFE to feedforward neural network language models (FNN-LMs). Experimental results show that, without any recurrent feedback, FOFE-based FNN-LMs can significantly outperform not only the standard fixed-input FNN-LMs but also the popular recurrent neural network language models (RNN-LMs).
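The sketch below shows one way a FOFE code of the word history could replace a fixed window of words at the input of a feedforward LM. It is a hypothetical illustration and not the authors' MATLAB implementation. It uses a single FOFE code, randomly initialized and untrained weights, one ReLU hidden layer, and made-up sizes, all of which are assumptions. It also checks a useful identity: multiplying the FOFE code by the projection (embedding) matrix equals an α-weighted sum of word embeddings. The projected input can therefore be updated incrementally in embedding space as h_t = α·h_{t-1} + E[w_t].

```python
import math
import random
from typing import List, Sequence

Matrix = List[List[float]]


def fofe_code(history: Sequence[int], vocab_size: int, alpha: float) -> List[float]:
    """FOFE of a word-id history: z_t = alpha * z_{t-1} + one_hot(w_t)."""
    z = [0.0] * vocab_size
    for word_id in history:
        z = [alpha * v for v in z]
        z[word_id] += 1.0
    return z


def project(code: List[float], embeddings: Matrix) -> List[float]:
    """Multiply the FOFE code by the projection matrix (row vector times E)."""
    dim = len(embeddings[0])
    return [sum(code[i] * embeddings[i][d] for i in range(len(code))) for d in range(dim)]


def incremental_projection(history: Sequence[int], embeddings: Matrix, alpha: float) -> List[float]:
    """Same vector computed directly in embedding space: h_t = alpha * h_{t-1} + E[w_t]."""
    h = [0.0] * len(embeddings[0])
    for word_id in history:
        h = [alpha * v + e for v, e in zip(h, embeddings[word_id])]
    return h


def next_word_probs(x: List[float], w_hidden: Matrix, w_out: Matrix) -> List[float]:
    """Hypothetical feedforward head: one ReLU hidden layer, then a softmax over the vocabulary."""
    hidden = [max(0.0, sum(xi * wi for xi, wi in zip(x, row))) for row in w_hidden]
    logits = [sum(h * w for h, w in zip(hidden, row)) for row in w_out]
    top = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(logit - top) for logit in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Toy sizes and random (untrained) weights, chosen only for illustration.
random.seed(0)
VOCAB, EMB, HID, ALPHA = 5, 4, 8, 0.7
E = [[random.gauss(0.0, 0.1) for _ in range(EMB)] for _ in range(VOCAB)]
W_h = [[random.gauss(0.0, 0.1) for _ in range(EMB)] for _ in range(HID)]
W_o = [[random.gauss(0.0, 0.1) for _ in range(HID)] for _ in range(VOCAB)]

history = [2, 0, 3, 1]  # word ids of the preceding context, oldest first
x = project(fofe_code(history, VOCAB, ALPHA), E)
probs = next_word_probs(x, W_h, W_o)
print([round(p, 3) for p in probs])
```

Because the input has a fixed size no matter how long `history` is, the network stays purely feedforward. The incremental form avoids building the full vocabulary-sized FOFE vector at every step.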

References:

[1] S. Zhang, H. Jiang, M. Xu, J. Hou, L. Dai, “A Fixed-Size Encoding Method for Variable-Length Sequences with its Application to Neural Network Language Models,” arXiv:1505.01504.

[2] S. Zhang, H. Jiang, M. Xu, J. Hou, L. Dai, “The Fixed-Size Ordinally-Forgetting Encoding Method for Neural Network Language Models,” Proc. of the 53rd Annual Meeting of the Association for Computational Linguistics (ACL 2015), July 2015.

Software:

The MATLAB code to reproduce the results in [1,2] can be downloaded here.