Semantic Word Embedding

In this project, we propose a general framework for incorporating semantic knowledge into the popular data-driven learning of word embeddings in order to improve their quality. Under this framework, we represent semantic knowledge as a set of ordinal ranking inequalities and formulate the learning of semantic word embeddings (SWE) as a constrained optimization problem, in which the data-derived objective function is optimized subject to all the ordinal knowledge inequality constraints extracted from available knowledge resources such as a thesaurus and WordNet. We have demonstrated that this constrained optimization problem can be solved efficiently by the stochastic gradient descent (SGD) algorithm, even for a large number of inequality constraints.
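
As a rough illustration of how ordinal inequality constraints might be handled by SGD, the sketch below relaxes each constraint sim(i, j) > sim(i, k) into a hinge penalty and adds it to a data term. This is a toy under stated assumptions, not the project's implementation. The penalty relaxation, the dot-product similarity, the quadratic stand-in for the data-derived objective, the weight `beta`, all function names, and the three example vectors are hypothetical choices made for the example.

```python
import random

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def violation(emb, c):
    """Hinge violation of one ordinal constraint sim(i, j) > sim(i, k)."""
    i, j, k = c
    return max(0.0, dot(emb[i], emb[k]) - dot(emb[i], emb[j]))

def total_penalty(emb, constraints):
    return sum(violation(emb, c) for c in constraints)

def sgd_step(emb, data_emb, c, lr=0.1, beta=1.0):
    """One SGD update on the words touched by a single sampled constraint.

    Assumed objective: 0.5 * ||w - data_w||^2 (a stand-in for the real
    data-derived objective) plus beta times the hinge violation.
    """
    i, j, k = c
    wi, wj, wk = emb[i], emb[j], emb[k]
    # Gradient of the stand-in data term for each word involved.
    grads = {w: [a - b for a, b in zip(emb[w], data_emb[w])] for w in (i, j, k)}
    if violation(emb, c) > 0.0:
        # Subgradient of dot(wi, wk) - dot(wi, wj) while the constraint is violated.
        for d in range(len(wi)):
            grads[i][d] += beta * (wk[d] - wj[d])
            grads[j][d] -= beta * wi[d]
            grads[k][d] += beta * wi[d]
    for w in (i, j, k):
        emb[w] = [a - lr * g for a, g in zip(emb[w], grads[w])]

def train(emb, data_emb, constraints, steps=200, seed=0):
    rng = random.Random(seed)
    for _ in range(steps):
        # Stochastic part: sample one constraint per step.
        sgd_step(emb, data_emb, rng.choice(constraints))
    return emb

# Toy vectors standing in for embeddings learned from data alone.
data_emb = {"good": [1.0, 0.0], "great": [0.0, 1.0], "bad": [1.0, 0.2]}
# Hypothetical knowledge: "good" should be closer to "great" than to "bad".
constraints = [("good", "great", "bad")]

emb = {w: list(v) for w, v in data_emb.items()}
before = total_penalty(emb, constraints)
train(emb, data_emb, constraints)
after = total_penalty(emb, constraints)
print(before, after)
```

Each update touches only the three words in the sampled constraint, so the cost per step does not depend on the total number of constraints. That is one plausible reason an SGD-style solver can cope with a large constraint set.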

Reference:

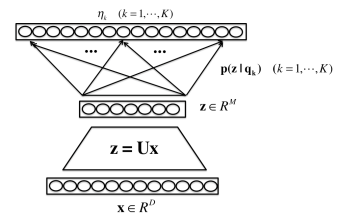

[1] Shiliang Zhang and Hui Jiang, “Hybrid Orthogonal Projection and Estimation (HOPE): A New Framework to Probe and Learn Neural Networks,” arXiv:1502.00702.